英文:

What is the best practice to apply cross-validation using TimeSeriesSplit() over dataframe within end-2-end pipeline in python?

问题

Sure, here's the translated code section:

让我们假设我有以下的[数据集](https://drive.google.com/file/d/18PGLNnOI44LVFignYriBWQFW9WBkTX5c/view?usp=share_link),它以以下的[pandas]数据框格式具有一个*非标准*时间戳列,没有日期时间格式,如下所示:

+--------+-----+

|TS_24hrs|count|

+--------+-----+

|0 |157 |

|1 |334 |

|2 |176 |

|3 |86 |

|4 |89 |

... ...

|270 |192 |

|271 |196 |

|270 |251 |

|273 |138 |

+--------+-----+

274行 × 2列

我已经在分割数据**不**使用交叉验证(CV)进入*训练集*和*测试集*之后应用了一些回归算法,并获得了以下结果:

```python

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

#将时间序列数据加载为数据框

df = pd.read_csv('/content/U2996_24hrs_.csv', sep=",")

print(df.shape)

#将数据分割为训练集和测试集

from sklearn.model_selection import train_test_split

train, test = train_test_split(df, test_size=0.27, shuffle=False)

print(train.shape) #(200, 2)

print(test.shape) #(74, 2)

#可视化分割后的数据

train['count'].plot(label='训练集')

test['count'].plot(label='测试集')

plt.legend()

plt.show()

#训练和拟合模型

from sklearn.ensemble import RandomForestRegressor

rf = RandomForestRegressor().fit(train, train['count']) #X, y

rf.score(train, train['count']) #0.9998644192184375

#使用模型对测试集进行预测

predictions = rf.predict(test)

#将预测结果转换为数据框以便于绘图

df_pre = pd.DataFrame({'TS_24hrs':test['TS_24hrs'], 'count_prediction':predictions})

#计算平均绝对误差

from sklearn.metrics import mean_absolute_error

rf_mae = mean_absolute_error(test['count'], df_pre['count_prediction'])

print(train.shape) #(200, 2)

print(test.shape) #(74, 2)

print(df_pre.shape) #(74, 2)

#可视化使用回归模型的预测

train['count'].plot(label='训练集')

test['count'].plot(label='测试集')

df_pre['count_prediction'].plot(label=f'RF预测 MAE={rf_mae:.2f}')

plt.legend()

plt.show()

根据这个答案,我注意到:

> 如果您的数据已经基于时间排序,则只需在train, test = train_test_split(newdf, test_size=0.3, shuffle=False)中使用shuffle=False

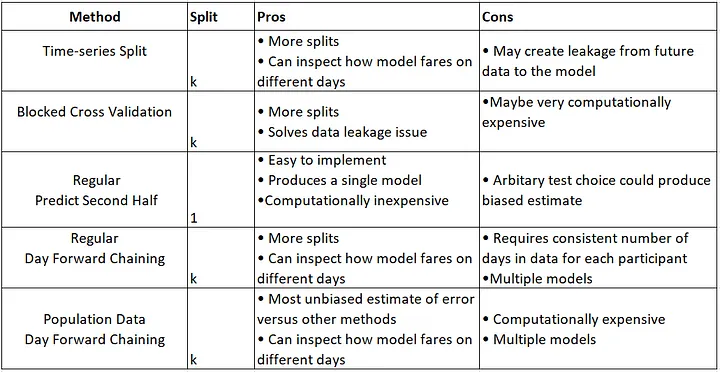

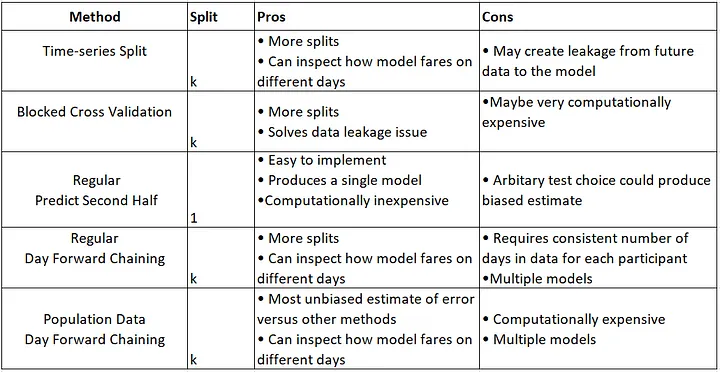

到目前为止,我已经使用了这种经典的数据拆分方法,但我想尝试一些基于时间序列的拆分方法,如下所总结:

另外,根据我的调查(请参考帖子末尾的参考资料),建议在应用回归模型之前使用交叉验证方法(K-Fold)。解释:时间序列中的交叉验证

问题: 如何使用CV方法拆分时间序列数据以获得可比较的结果?(绘制数据拆分的质量以确保/评估数据拆分的质量)

- TSS CV方法:

TimeSeriesSplit() - BTSS CV方法:

BlockingTimeSeriesSplit()

到目前为止,我考虑的最接近的解决方案是将最后的74个观测值分开作为保留测试集,然后在前面的200个观测值上执行CV。我仍然在尝试使用max_train_size=199、test_size=73等参数,但这很棘手,我无法弄清楚。实际上,我在训练RF回归器之前,对训练集(前200天/观测)应用了基于时间序列的数据拆分,然后将模型拟合到测试集(最后74天/观测)。

我尝试使用TimeSeriesSplit()如下失败:

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

#将时间序列数据加载为数据框

df = pd.read_csv('/content/U2996_24hrs_.csv', sep=",")

print(df.shape)

#尝试使用TimeSeriesSplit()方法拆分数据与CV(K-Fold)

from sklearn.model_selection import TimeSeriesSplit

tscv = TimeSeriesSplit(

n_splits=len(df['TS_24hrs'].unique()) - 1,

gap=0, #因为数据已经按24小时分组以获取每日计数,所以不需要间隔

#max_train_size=199, #在这里:https://stackoverflow.com/a/43326651/10452700中推荐设置这个参数,我不确定这是否适用于我的问题

#test_size=73,

)

for train_idx, test_idx in tscv.split(df['TS_24hrs']):

print('TRAIN: ', df.loc[df.index.isin(train_idx), 'TS_24hrs'].unique(),

'val-TEST: ', df.loc[df.index.isin(test_idx

<details>

<summary>英文:</summary>

Let's say I have [dataset](https://drive.google.com/file/d/18PGLNnOI44LVFignYriBWQFW9WBkTX5c/view?usp=share_link) within the following [tag:pandas] dataframe format with a *non-standard* timestamp column without datetime format as follows:

```None

+--------+-----+

|TS_24hrs|count|

+--------+-----+

|0 |157 |

|1 |334 |

|2 |176 |

|3 |86 |

|4 |89 |

... ...

|270 |192 |

|271 |196 |

|270 |251 |

|273 |138 |

+--------+-----+

274 rows × 2 columns

I have already applied some regression algorithms after splitting data without using cross-validation (CV) into training-set and test-set and got results like the following:

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

#Load the time-series data as dataframe

df = pd.read_csv('/content/U2996_24hrs_.csv', sep=",")

print(df.shape)

# Split the data into training and testing sets

from sklearn.model_selection import train_test_split

train, test = train_test_split(df, test_size=0.27, shuffle=False)

print(train.shape) #(200, 2)

print(test.shape) #(74, 2)

#visulize splitted data

train['count'].plot(label='Training-set')

test['count'].plot(label='Test-set')

plt.legend()

plt.show()

#Train and fit the model

from sklearn.ensemble import RandomForestRegressor

rf = RandomForestRegressor().fit(train, train['count']) #X, y

rf.score(train, train['count']) #0.9998644192184375

# Use the forest's model to predict on the test-set

predictions = rf.predict(test)

#convert prediction result into dataframe for plot issue in ease

df_pre = pd.DataFrame({'TS_24hrs':test['TS_24hrs'], 'count_prediction':predictions})

# Calculate the mean absolute errors

from sklearn.metrics import mean_absolute_error

rf_mae = mean_absolute_error(test['count'], df_pre['count_prediction'])

print(train.shape) #(200, 2)

print(test.shape) #(74, 2)

print(df_pre.shape) #(74, 2)

#visulize forecast or prediction of used regressor model

train['count'].plot(label='Training-set')

test['count'].plot(label='Test-set')

df_pre['count_prediction'].plot(label=f'RF_forecast MAE={rf_mae:.2f}')

plt.legend()

plt.show()

According this answer I noticed:

> if your data is already sorted based on time then simply use shuffle=False in

> train, test = train_test_split(newdf, test_size=0.3, shuffle=False)

So far, I have used this classic split data method, but I want to experiment with Time-series-based split methods that are summarized here:

Additionally, based on my investigation (please see the references at the end of the post), it is recommended to use the cross-validation method (K-Fold) before applying regression models. explanation: Cross Validation in Time Series

Problem: How can split time-series data with using CV methods for comparable results? (plot the quality of data split for ensure\evaluate the quality of data splitting)

- TSS CV method:

TimeSeriesSplit() - BTSS CV method:

BlockingTimeSeriesSplit()

So far, the closest solution that crossed my mind is to separate the last 74 observations as hold-on test-set a side and do CV on just the first 200 observations. I'm still struggling with playing with these arguments max_train_size=199, test_size=73 to reach desired results, but it's very tricky and I couldn't figure it out. in fact, I applied time-series-based data split using TSS CV methods before training RF regressor to train-set (first 200 days\observations) and fit model over test-set (last 74 days\observations).

I've tried recommended TimeSeriesSplit() as the following unsuccessfully:

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

#Load the time-series data as dataframe

df = pd.read_csv('/content/U2996_24hrs_.csv', sep=",")

print(df.shape)

#Try to split data with CV (K-Fold) by using TimeSeriesSplit() method

from sklearn.model_selection import TimeSeriesSplit

tscv = TimeSeriesSplit(

n_splits=len(df['TS_24hrs'].unique()) - 1,

gap=0, # since data alraedy groupedby for 24hours to retrieve daily count there is no need to to have gap

#max_train_size=199, #here: https://stackoverflow.com/a/43326651/10452700 they recommended to set this argument I'm unsure if it is the case for my problem

#test_size=73,

)

for train_idx, test_idx in tscv.split(df['TS_24hrs']):

print('TRAIN: ', df.loc[df.index.isin(train_idx), 'TS_24hrs'].unique(),

'val-TEST: ', df.loc[df.index.isin(test_idx), 'TS_24hrs'].unique())

The following figures for understanding and better alignment of split data could be part of the expected output if one could plot for each method:

expected output:

References:

-

Using k-fold cross-validation for time-series model selection

-

Cross Validation for Time Series Classification (Not Forecasting!)

Edit1:

I found 3 related posts:

- post1

- post2

> I decided to applyTimeSeriesSplit()in short TTS cv output within for loop to train\fit regression model over training-set with assist of CV-set thenpredict()over Hold-on test-set. The current output of my implementation shows slightly improvement in forecasting with or without, which could be due to problems in my implementation.

#Load the time-series data as dataframe

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

df = pd.read_csv('/content/U2996_24hrs_.csv', sep=",")

#print(df.shape) #(274, 2)

#####----------------------------without CV

# Split the data into training and testing sets

from sklearn.model_selection import train_test_split

train, test = train_test_split(df, test_size=0.27, shuffle=False)

print(train.shape) #(200, 2)

print(test.shape) #(74, 2)

#visulize splitted data

#train['count'].plot(label='Training-set')

#test['count'].plot(label='Test-set')

#plt.legend()

#plt.show()

#Train and fit the model

from sklearn.ensemble import RandomForestRegressor

rf = RandomForestRegressor().fit(train, train['count']) #X, y

rf.score(train, train['count']) #0.9998644192184375

# Use the forest's model to predict on the test-set

predictions = rf.predict(test)

#convert prediction result into dataframe for plot issue in ease

df_pre = pd.DataFrame({'TS_24hrs':test['TS_24hrs'], 'count_prediction':predictions})

# Calculate the mean absolute errors

from sklearn.metrics import mean_absolute_error

rf_mae = mean_absolute_error(test['count'], df_pre['count_prediction'])

#####----------------------------with CV

df1 = df[:200] #take just first 1st 200 records

#print(df1.shape) #(200, 2)

#print(len(df1)) #200

from sklearn.model_selection import TimeSeriesSplit

tscv = TimeSeriesSplit(

n_splits=len(df1['TS_24hrs'].unique()) - 1,

#n_splits=3,

gap=0, # since data alraedy groupedby for 24hours to retrieve daily count there is no need to to have gap

#max_train_size=199,

#test_size=73,

)

#print(type(tscv)) #<class 'sklearn.model_selection._split.TimeSeriesSplit'>

#mae = []

cv = []

TS_24hrs_tss = []

predictions_tss = []

for train_index, test_index in tscv.split(df1):

cv_train, cv_test = df1.iloc[train_index], df1.iloc[test_index]

#cv.append(cv_test.index)

#print(cv_train.shape) #(199, 2)

#print(cv_test.shape) #(1, 2)

TS_24hrs_tss.append(cv_test.values[:,0])

#Train and fit the model

from sklearn.ensemble import RandomForestRegressor

rf_tss = RandomForestRegressor().fit(cv_train, cv_train['count']) #X, y

# Use the forest's model to predict on the cv_test

predictions_tss.append(rf_tss.predict(cv_test))

#print(predictions_tss)

# Calculate the mean absolute errors

#from sklearn.metrics import mean_absolute_error

#rf_tss_mae = mae.append(mean_absolute_error(cv_test, predictions_tss))

#print(rf_tss_mae)

#print(len(TS_24hrs_tss)) #199

#print(type(TS_24hrs_tss)) #<class 'list'>

#print(len(predictions_tss)) #199

#convert prediction result into dataframe for plot issue in ease

import pandas as pd

df_pre_tss1 = pd.DataFrame(TS_24hrs_tss)

df_pre_tss1.columns =['TS_24hrs_tss']

#df_pre_tss1

df_pre_tss2 = pd.DataFrame(predictions_tss)

df_pre_tss2.columns =['count_predictioncv_tss']

#df_pre_tss2

df_pre_tss= pd.concat([df_pre_tss1,df_pre_tss2], axis=1)

df_pre_tss

# Use the forest's model to predict on the hold-on test-set

predictions_tsst = rf_tss.predict(test)

#print(len(predictions_tsst)) #74

#convert prediction result of he hold-on test-set into dataframe for plot issue in ease

df_pre_test = pd.DataFrame({'TS_24hrs_tss':test['TS_24hrs'], 'count_predictioncv_tss':predictions_tsst})

# Fix the missing record (1st record)

df_col_merged = df_pre_tss.merge(df_pre_test, how="outer")

#print(df_col_merged.shape) #(273, 2) 1st record is missing

ddf = df_col_merged.rename(columns={'TS_24hrs_tss': 'TS_24hrs', 'count_predictioncv_tss': 'count'})

df_first= df.head(1)

df_merged_pred = df_first.merge(ddf, how="outer") #insert first record from original df to merged ones

#print(df_merged_pred.shape) #(274, 2)

print(train.shape) #(200, 2)

print(test.shape) #(74, 2)

print(df_pre_test.shape) #(74, 2)

# Calculate the mean absolute errors

from sklearn.metrics import mean_absolute_error

rf_mae_tss = mean_absolute_error(test['count'], df_pre_test['count_predictioncv_tss'])

#visulize forecast or prediction of used regressor model

train['count'].plot(label='Training-set', alpha=0.5)

test['count'].plot(label='Test-set', alpha=0.5)

#cv['count'].plot(label='cv TSS', alpha=0.5)

df_pre['count_prediction'].plot(label=f'RF_forecast MAE={rf_mae:.2f}', alpha=0.5)

df_pre_test['count_predictioncv_tss'].plot(label=f'RF_forecast_tss MAE={rf_mae_tss:.2f}', alpha=0.5 , linestyle='--')

plt.legend()

plt.title('Plot forecast results with & without cross-validation (K-Fold)')

plt.show()

- post3 sklearn

> (I couldn't implement it, one can try this) usingmake_pipeline()and usedef evaluate(model, X, y, cv):function but still confusing if I want to collect the results in the form of dataframe for visualizing case and what is the best practice to pass cv result to regressor and compare the results.

Edit2:

In the spirit of DRY, I tried to build an end-to-end pipeline without/with CV methods, load a dataset, perform feature scaling and supply the data into a regression model:

#Load the time-series data as dataframe

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

df = pd.read_csv('/content/U2996_24hrs_.csv', sep=",")

#print(df.shape) #(274, 2)

#####--------------Create pipeline without CV------------

# Split the data into training and testing sets for just visualization sense

from sklearn.model_selection import train_test_split

train, test = train_test_split(df, test_size=0.27, shuffle=False)

print(train.shape) #(200, 2)

print(test.shape) #(74, 2)

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import MinMaxScaler

from sklearn.ensemble import RandomForestRegressor

from sklearn.pipeline import Pipeline

# Split the data into training and testing sets without CV

X = df['TS_24hrs'].values

y = df['count'].values

print(X_train.shape) #(200, 1)

print(y_train.shape) #(200,)

print(X_test.shape) #(74, 1)

print(y_test.shape) #(74,)

# Here is the trick

X = X.reshape(-1,1)

X_train, X_test, y_train, y_test = train_test_split(X, y , test_size=0.27, shuffle=False, random_state=0)

print(X_train.shape) #(200, 1)

print(y_train.shape) #(1, 200)

print(X_test.shape) #(74, 1)

print(y_test.shape) #(1, 74)

#build an end-to-end pipeline, and supply the data into a regression model. It avoids leaking the test set into the train set

rf_pipeline = Pipeline([('scaler', MinMaxScaler()),('RF', RandomForestRegressor())])

rf_pipeline.fit(X_train, y_train)

#Displaying a Pipeline with a Preprocessing Step and Regression

from sklearn import set_config

set_config(display="diagram")

rf_pipeline # click on the diagram below to see the details of each step

r2 = rf_pipeline.score(X_test, y_test)

print(f"RFR: {r2}") # -0.3034887940244342

# Use the Randomforest's model to predict on the test-set

y_predictions = rf_pipeline.predict(X_test.reshape(-1,1))

#convert prediction result into dataframe for plot issue in ease

df_pre = pd.DataFrame({'TS_24hrs':test['TS_24hrs'], 'count_prediction':y_predictions})

# Calculate the mean absolute errors

from sklearn.metrics import mean_absolute_error

rf_mae = mean_absolute_error(y_test, df_pre['count_prediction'])

print(train.shape) #(200, 2)

print(test.shape) #(74, 2)

print(df_pre.shape) #(74, 2)

#visulize forecast or prediction of used regressor model

train['count'].plot(label='Training-set')

test['count'].plot(label='Test-set')

df_pre['count_prediction'].plot(label=f'RF_forecast MAE={rf_mae:.2f}')

plt.legend()

plt.title('Plot results without cross-validation (K-Fold) using pipeline')

plt.show()

#####--------------Create pipeline with TSS CV------------

#####--------------Create pipeline with BTSS CV------------

The results got worse using the pipeline, based on MAE score comparing implementation when separating the steps outside of the pipeline!

答案1

得分: 1

以下是翻译的代码部分:

rf_pipeline = Pipeline([('scaler', MinMaxScaler()),('RF', RandomForestRegressor())])

fold_err = []

for i in range(0, 200, 50):

X_train = X[i:i+50]

y_train = y[i+50:i+100]

rf_pipeline.fit(X_train, y_train)

y_predictions = rf_pipeline.predict(X_test)

fold_err.append(mean_absolute_error(y_test, y_predictions))

print(err)

# calculate MSE of fold_err and decide if the model performs good enough

# inspect the variance. if it is too high, the model is unstable

如果还有其他需要翻译的部分,请告诉我。

英文:

First, you should not be afraid when results are getting worse, as your goal should be getting the true model performance evaluation. The cross-validation procedure enables one to understand how robust the model is by taking the same model, training it on different, but comparable chunks of data and then testing it on different, but still comparable pieces of data. Then you analyze the variance of the results and if it is low enough, you know that the model with given parameters is robust enough to be used for the unseen data in the future.

This means your test sets should be equal in size. Usually, the size is defined by the problem requirements.

For TSS training, data chunks are different in size. So your model is trained on different data size in each fold. From such an experiment, you can learn optimal training set size, but it is harder to evaluate the model performance. Sometimes models become better as the training set grows, but it is usually not the case.

Now for BTSS, the sizes of training and test sets for each fold are equal, so this can be used for evaluating model quality, as you are comparing apples to apples. And, by the way, it is always a good practice to check that the statistical distribution of your data is stable.

Once we cleared this, creating folds is rather simple. Set your train/test sizes to (say) 150/50 with stride 15. Then you will get 6 folds:

Train_idx Test_idx

[0:150] [150:200]

[15:165] [165:215]

[30:180] [180:230]

[45:195] [195:245]

[60:210] [210:260]

[75:225] [225:275] *The last fold will have a test set which is insignificantly smaller.

It would be much better to create non-overlapping training sets for each fold, as your model will learn from different data and the results of each fold would be completely independent, but obviously, it is not always possible. In your case, if your model lives well with a training set of size 50, then folds like the below would provide a better performance estimate:

Train_idx Test_idx

[0:50] [50:100]

[50:100] [100:150]

[100:150] [150:200]

[150:200] [200:250]

Let's take the last split and put it into practice:

rf_pipeline = Pipeline([('scaler', MinMaxScaler()),('RF', RandomForestRegressor())])

fold_err = []

for i in range(0, 200, 50):

X_train = X[i:i+50]

y_train = y[i+50:i+100]

rf_pipeline.fit(X_train, y_train)

y_predictions = rf_pipeline.predict(X_test)

fold_err.append(mean_absolute_error(y_test, y_predictions))

print(err)

# calculate MSE of fold_err and decide if the model performs good enough

# inspect the variance. if it is too high, the model is unstable

答案2

得分: 0

以下是翻译好的部分:

考虑到评论中的争论并在 @igrinis 的协助下,在 Edit1/post2 中找到一个可能的解决方案,我提出了以下实现来:

- 满足声明的预测策略:

> ... 训练 RF 回归器以在 训练集 (前 200 天/观察) 上进行训练并在 测试集 (最后 74 天/观察) 上拟合模型。 - 使用 TSS 类:

TimeSeriesSplit()

类似地,在循环中可以使用 BTSS 类来在管道中训练模型。最终预测的可视化如下:

注意:我计算拆分(K-折)的平均值:np.mean(rf_mae_test_tss) 并在图例中反映出来。

英文:

Considering the argues in the comments and assist of @igrinis and found a possible solution addressed in Edit1/post2, I came up with the following implementation to:

- meet the declared forecasting strategy:

> ... training RF regressor to train-set (first 200 days\observations) and fit model over test-set (last 74 days\observations). - use TSS class:

TimeSeriesSplit()

#Load the time-series data as dataframe

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

df = pd.read_csv('/content/U2996_24hrs_.csv', sep=",")

# Select the first 200 observations

df200 = df[:200]

# Split the data into training and testing sets

from sklearn.model_selection import train_test_split

#X_train, X_test, y_train, y_test = train_test_split(X, y , test_size=0.27, shuffle=False, random_state=0)

train, validation = train_test_split(df200 , test_size=0.2, shuffle=False)

test = df[200:]

#print(train.shape) #(160, 2)

#print(validation.shape) #(40, 2)

#print(test.shape) #(74, 2) #hold-on (unseen data)

#Train and fit the RF model

from sklearn.ensemble import RandomForestRegressor

#rf_model = RandomForestRegressor().fit(train, train['count']) #X, y

# calculate R2 score using model

#r2_train = rf_model.score(train, train['count'])

#print(f"RFR_train: {r2_train:.4f}") #RFR_train: 0.9995

#r2_validation = rf_model.score(validation, validation['count'])

#print(f"RFR_val: {r2_validation:.4f}") #RFR_val: 0.9972

#r2_test = rf_model.score(test, test['count'])

#print(f"RFR_test: {r2_test:.4f}") #RFR_test: 0.5967

# Use the forest's model to predict on the validation-set and test-set

#predictions_val = rf_model.predict(validation)

#predictions_test = rf_model.predict(test)

#build an end-to-end pipeline, and supply the data into a regression model and train within pipeline. It avoids leaking the test\val-set into the train-set

from sklearn.preprocessing import MinMaxScaler

from sklearn.ensemble import RandomForestRegressor

from sklearn.pipeline import Pipeline, make_pipeline

rf_pipeline = Pipeline([('scaler', MinMaxScaler()),('RF', RandomForestRegressor())]).fit(train, train['count']) #X, y

#Displaying a Pipeline with a Preprocessing Step and Regression

from sklearn import set_config

set_config(display="text")

#print(rf_pipeline) # Pipeline(steps=[('scaler', MinMaxScaler()), ('RF', RandomForestRegressor())])

# calculate R2 score using pipeline

#r2_train = rf_pipeline.score(train, train['count'])

#print(f"RFR_train: {r2_train:.4f}") #RFR_train: 0.9995

#r2_validation = rf_pipeline.score(validation, validation['count'])

#print(f"RFR_val: {r2_validation:.4f}") #RFR_val: 0.9972

#r2_test = rf_pipeline.score(test, test['count'])

#print(f"RFR_test: {r2_test:.4f}") #RFR_test: 0.5967

# Use the pipeline to predict over the validation-set and test-set

y_predictions_val = rf_pipeline.predict(validation)

y_predictions_test = rf_pipeline.predict(test)

from sklearn.model_selection import TimeSeriesSplit

tscv = TimeSeriesSplit(n_splits = 5)

rf_pipeline_tss = Pipeline([('scaler', MinMaxScaler()),('RF', RandomForestRegressor())])

rf_mae_test_tss = []

tss_cv_test_index = []

for train_index, test_index in tscv.split(df200):

cv_train, cv_test = df200.iloc[train_index], df200.iloc[test_index]

#print(f"cv_train: {cv_train.shape}")

#print(f"cv_test: {cv_test.shape}")

#print(f"cv_test_index: {cv_test.index}")

rf_pipeline_tss.fit(cv_train, cv_train['count'])

predictions_tss = rf_pipeline_tss.predict(cv_test)

rf_mae_test_tss.append(mean_absolute_error(cv_test['count'], predictions_tss))

tss_cv_test_index.append(list(cv_test.index))

print(rf_mae_test_tss)

print(tss_cv_test_index)

# Use the TSS-based pipeline to predict over the hold-on (unseen) test-set

y_predictions_test_tss = rf_pipeline_tss.predict(test)

Similarly, one can use BTSS class within the for-loop to train the model in the pipeline. The following visualisation of the final forecast :

Note: I calculate the mean of splits (K-folds): np.mean(rf_mae_test_tss) and reflect in legend in the plot.

通过集体智慧和协作来改善编程学习和解决问题的方式。致力于成为全球开发者共同参与的知识库,让每个人都能够通过互相帮助和分享经验来进步。

评论